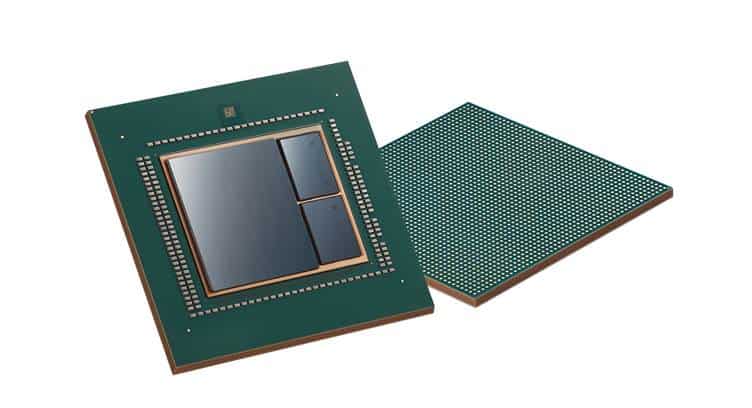

Baidu and Samsung Electronics announced that Baidu’s first cloud-to-edge AI accelerator, Baidu KUNLUN, has completed its development and will be mass-produced early next year.

The Baidu KUNLUN chip is built on the company’s advanced XPU, a home-grown neural processor architecture for cloud, edge, and AI, and on Samsung’s 14-nanometer (nm) process technology with its I-Cube (Interposer-Cube) package solution.

The chip offers 512 gigabytes per second (GBps) of memory bandwidth and delivers up to 260 tera operations per second (TOPS) at 150 watts. In addition, the new chip allows Ernie, a pre-training model for natural language processing, to run inference three times faster than on conventional GPU/FPGA-accelerated setups.
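As a rough illustration of what these headline figures imply, the sketch below derives two ratios from them: peak operations per watt, and peak operations per byte of memory traffic. It assumes the quoted values are peak numbers and that 1 GB means 10^9 bytes; neither the ratios nor the helper names (`tops_per_watt`, `ops_per_byte`) come from the announcement.

```python
# Peak figures quoted in the announcement.
PEAK_TOPS = 260            # tera operations per second
POWER_WATTS = 150          # watts
MEM_BANDWIDTH_GBPS = 512   # gigabytes per second (assumed: 1 GB = 10**9 bytes)


def tops_per_watt(tops: float, watts: float) -> float:
    """Peak power efficiency in TOPS per watt."""
    return tops / watts


def ops_per_byte(tops: float, gbps: float) -> float:
    """Peak operations available per byte moved from memory."""
    return (tops * 1e12) / (gbps * 1e9)


efficiency = tops_per_watt(PEAK_TOPS, POWER_WATTS)
balance = ops_per_byte(PEAK_TOPS, MEM_BANDWIDTH_GBPS)

print(f"{efficiency:.2f} TOPS/W")            # 1.73 TOPS/W
print(f"{balance:.0f} operations per byte")  # 508 operations per byte
```

Under these assumptions, a workload would need to perform roughly 500 operations for every byte it reads from memory to approach the quoted peak throughput; real workloads will typically land below that.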

Leveraging the chip’s limit-pushing computing power and power efficiency, Baidu can effectively support a wide variety of large-scale AI workloads, including search ranking, speech recognition, image processing, natural language processing, and autonomous driving, as well as deep learning platforms such as PaddlePaddle.

Through the first foundry cooperation between the two companies, Baidu will provide advanced AI platforms for maximizing AI performance, and Samsung will expand its foundry business into high-performance computing (HPC) chips designed for cloud and edge computing.

OuYang Jian, Distinguished Architect, Baidu

Baidu KUNLUN is a very challenging project since it not only requires a high level of reliability and performance at the same time, but is also a compilation of the most advanced technologies in the semiconductor industry.

Ryan Lee, VP of Foundry Marketing, Samsung

Baidu KUNLUN is an important milestone for Samsung Foundry as we’re expanding our business area beyond mobile to datacenter applications by developing and mass-producing AI chips.